In “SQL: The Common Solvent for REST APIs” we saw how Steampipe’s suite of open source plug-ins translate REST API calls directly into SQL tables. Until recently, these plug-ins were tightly bound to the open source engine and to the instance of Postgres that it launches and controls. That led members of the Steampipe community to ask: “Can we use the plug-ins in our own Postgres databases?” Now the answer is yes (and more), but let’s focus on Postgres first.

NOTE: Every plugin in the Steampipe ecosystem is now also a standalone foreign-data-wrapper extension for Postgres, a virtual-table extension for SQLite, and an export tool.


Using a Steampipe Plugin as a Standalone Postgres Foreign Data Wrapper (FDW)

Visit Steampipe downloads to find the installer for your OS, and run it to acquire the Postgres FDW distribution of a plugin (in this case, the GitHub plugin). It’s one of (currently) 140 plug-ins available on the Steampipe hub. Each plugin provides a set of tables that map API calls to database tables: in the case of the GitHub plugin, 55 such tables. Each table can appear in a FROM or JOIN clause; here’s a query that selects columns from the github_issue table, filtering on a repository and an author.

    select
      state,
      updated_at,
      title,
      url
    from
      github_issue
    where
      repository_full_name = 'turbot/steampipe'
      and author_login = 'judell'
    order by
      updated_at desc;

If you’re using Steampipe, you can install the GitHub plugin like this:

    steampipe plugin install github

then run the query in the Steampipe CLI or in any Postgres client that can connect to Steampipe’s instance of Postgres.

But if you want to do the same thing in your own instance of Postgres, you can install the plugin in a different way.

    $ sudo /bin/sh -c "$(curl -fsSL
    Enter the plugin name: github
    Enter the version (latest):

    Found:
    - PostgreSQL version:   14
    - PostgreSQL location:  /usr/lib/postgresql/14
    - Operating system:     Linux
    - System architecture:  x86_64

    Based on the above, steampipe_postgres_github.pg14.linux_amd64.tar.gz
    will be downloaded, extracted and installed at: /usr/lib/postgresql/14

    Proceed with installing Steampipe PostgreSQL FDW for version 14 at
    /usr/lib/postgresql/14?
    - Press 'y' to proceed with the current version.
    - Press 'n' to customize your PostgreSQL installation directory
    and select a different version. (Y/n):

    Downloading steampipe_postgres_github.pg14.linux_amd64.tar.gz...
    ########################################################################################### 100.0%
    steampipe_postgres_github.pg14.linux_amd64/
    steampipe_postgres_github.pg14.linux_amd64/steampipe_postgres_github.so
    steampipe_postgres_github.pg14.linux_amd64/steampipe_postgres_github.control
    steampipe_postgres_github.pg14.linux_amd64/steampipe_postgres_github--1.0.sql
    steampipe_postgres_github.pg14.linux_amd64/install.sh
    steampipe_postgres_github.pg14.linux_amd64/README.md

    Download and extraction completed.

    Installing steampipe_postgres_github in /usr/lib/postgresql/14...
    Successfully installed steampipe_postgres_github extension!

    Files have been copied to:
    - Library directory: /usr/lib/postgresql/14/lib
    - Extension directory: /usr/share/postgresql/14/extension/

Now connect to your server as usual, using psql or another client, most typically as the postgres user. Then run these commands, which are typical for any Postgres foreign data wrapper. As with all Postgres extensions, you start like this:

    CREATE EXTENSION steampipe_postgres_github;

To use a foreign data wrapper, you first create a server:

    CREATE SERVER steampipe_github FOREIGN DATA WRAPPER steampipe_postgres_github
      OPTIONS (config 'token="ghp_..."');

Use OPTIONS to configure the extension to use your GitHub access token. (Alternatively, the standard environment variables used to configure a Steampipe plugin, which in this case is just GITHUB_TOKEN, will work if you set them before starting your instance of Postgres.)
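A minimal sketch of the environment-variable route, as an alternative to the CREATE SERVER statement above rather than an addition to it. It assumes GITHUB_TOKEN was exported in the environment of the Postgres server process before that process started (how you do that depends on how your Postgres is launched), and that the plugin picks the variable up when no config option is supplied.

```sql
-- Sketch: let the plugin read GITHUB_TOKEN from the server's environment
-- instead of embedding the token in the server definition.
-- Assumption: GITHUB_TOKEN was set before the Postgres server process started.
CREATE EXTENSION IF NOT EXISTS steampipe_postgres_github;

-- No OPTIONS clause: the plugin is assumed to fall back to its
-- standard environment variable for credentials.
CREATE SERVER steampipe_github
  FOREIGN DATA WRAPPER steampipe_postgres_github;
```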

The tables provided by the extension will live in a schema, so define one:

    CREATE SCHEMA github;

Now import the schema defined by the foreign server into the local schema you just created:

    IMPORT FOREIGN SCHEMA github FROM SERVER steampipe_github INTO github;

Now run a query!

The foreign tables provided by the extension live in the github schema, so by default you’ll refer to tables like github.github_my_repository. If you set search_path to github, though, the schema becomes optional and you can write queries using unqualified table names. Here’s a query we showed last time. It uses the github_search_repository table, which encapsulates the GitHub API for searching repositories.

Suppose you’re looking for repos related to PySpark. Here’s a query that finds repos whose names match “pyspark” and reports a few metrics to help you gauge activity and popularity.

    select
      name_with_owner,
      updated_at,     -- how recently updated?
      stargazer_count -- how many people starred the repo?
    from
      github_search_repository
    where
      query = 'pyspark in:name'
    order by
      stargazer_count desc
    limit 10;

    +---------------------------------------+------------+-----------------+
    | name_with_owner                       | updated_at | stargazer_count |
    +---------------------------------------+------------+-----------------+
    | AlexIoannides/pyspark-example-project | 2024-02-09 |            1324 |
    | mahmoudparsian/pyspark-tutorial       | 2024-02-11 |            1077 |
    | spark-examples/pyspark-examples       | 2024-02-11 |            1007 |
    | palantir/pyspark-style-guide          | 2024-02-12 |             924 |
    | pyspark-ai/pyspark-ai                 | 2024-02-12 |             791 |
    | lyhue1991/eat_pyspark_in_10_days      | 2024-02-01 |             719 |
    | UrbanInstitute/pyspark-tutorials      | 2024-01-21 |             400 |
    | krishnaik06/Pyspark-With-Python       | 2024-02-11 |             400 |
    | ekampf/PySpark-Boilerplate            | 2024-02-11 |             388 |
    | commoncrawl/cc-pyspark                | 2024-02-12 |             361 |
    +---------------------------------------+------------+-----------------+

If you have a lot of repos, the first run of that query will take a few seconds. The second run will return results instantly, though, because the extension includes a powerful and sophisticated cache.
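The PySpark query above names github_search_repository without a schema, which resolves only when the github schema is on the search path. Here are both styles side by side, as a sketch that assumes the github schema was populated by the IMPORT FOREIGN SCHEMA step earlier.

```sql
-- Fully qualified: works no matter what search_path is set to.
select name_with_owner, stargazer_count
from github.github_search_repository
where query = 'pyspark in:name'
limit 5;

-- Put the github schema first on this session's search path;
-- unqualified names now resolve to the imported foreign tables.
set search_path to github, public;

select name_with_owner, stargazer_count
from github_search_repository
where query = 'pyspark in:name'
limit 5;
```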

And that’s all there is to it! Every Steampipe plugin is now also a foreign data wrapper that works exactly like this one. You can load multiple extensions in order to join across APIs. Of course, you can also join any of these API-sourced foreign tables with your own Postgres tables. And to save the results of any query, you can prepend “create table NAME as” or “create materialized view NAME as” to persist the results as a table or view.
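As a sketch of the persistence option, here is the PySpark search saved as a materialized view. The view name pyspark_repos is hypothetical, and the query assumes the github schema from the setup above.

```sql
-- Persist the PySpark search as a materialized view (name is hypothetical).
create materialized view pyspark_repos as
select name_with_owner, updated_at, stargazer_count
from github.github_search_repository
where query = 'pyspark in:name';

-- Reads come from the stored snapshot rather than the GitHub API.
select *
from pyspark_repos
order by stargazer_count desc
limit 10;

-- Re-run the underlying API query and replace the stored rows.
refresh materialized view pyspark_repos;
```

A materialized view suits this case because a single REFRESH brings the snapshot up to date, whereas a table created with create table NAME as stays fixed until you drop and re-create it.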

Using a Steampipe Plugin as a SQLite Extension That Provides Virtual Tables

Visit Steampipe downloads to find the installer for your OS, and run it to acquire the SQLite distribution of the same plugin.

    $ sudo /bin/sh -c "$(curl -fsSL
    Enter the plugin name: github
    Enter version (latest):
    Enter location (current directory):
    Downloading steampipe_sqlite_github.linux_amd64.tar.gz...
    ############################################################################ 100.0%
    steampipe_sqlite_github.so

    steampipe_sqlite_github.linux_amd64.tar.gz downloaded and
    extracted successfully at /home/jon/steampipe-sqlite.

Here’s the setup; you can place this code in ~/.sqliterc if you want it to run every time you start sqlite.

    .load /home/jon/steampipe-sqlite/steampipe_sqlite_github.so

    select steampipe_configure_github('
      token="ghp_..."
    ');

Now you can run the same query as above. Here, too, the results are cached, so a second run of the query will be instant.

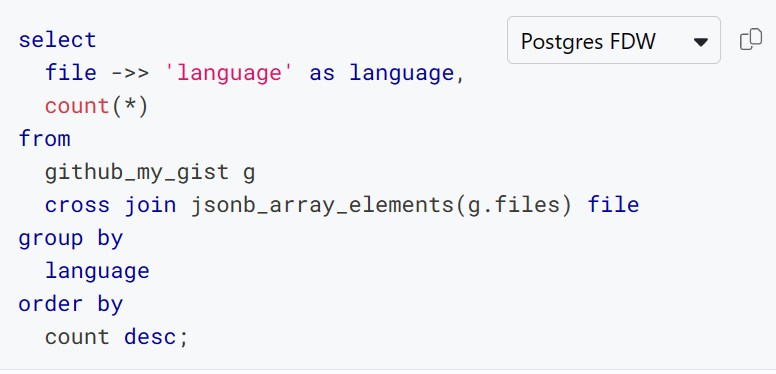

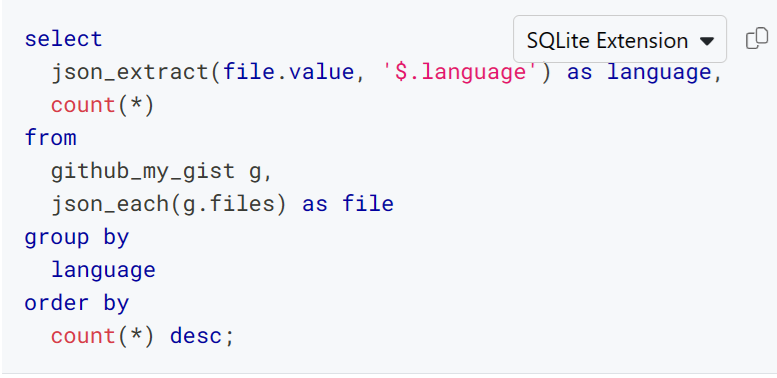

What about the differences between Postgres-flavored and SQLite-flavored SQL? The Steampipe hub is your friend! For example, it offers Postgres and SQLite variants of a query that accesses a field inside a JSON column in order to tabulate the languages associated with your gists.


The github_my_gist table reports details about gists that belong to the GitHub user who is authenticated to Steampipe. The language associated with each gist lives in a JSONB column called files, which contains a list of objects like this one:

    {
      "size": 24541,
      "type": "text/markdown",
      "raw_url": "...",
      "filename": "steampipe-readme-update.md",
      "language": "Markdown"
    }

The functions needed to project that list as rows differ: in Postgres you use jsonb_array_elements, and in SQLite it’s json_each.
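The hub’s own versions of these queries aren’t reproduced here, so treat the following as a sketch of the idea. It assumes files holds a JSON array of objects shaped like the one above; each variant expands the array into one row per file and counts files per language. The aliases g, f, file, and file_count are illustrative.

```sql
-- Postgres: jsonb_array_elements turns each element of the files array
-- into its own row, exposed here as the column "file".
select
  f.file ->> 'language' as language,
  count(*) as file_count
from
  github.github_my_gist as g
  cross join lateral jsonb_array_elements(g.files) as f(file)
group by
  f.file ->> 'language'
order by
  file_count desc;

-- SQLite: json_each does the same job; each array element
-- appears in the table-valued function's "value" column.
select
  json_extract(f.value, '$.language') as language,
  count(*) as file_count
from
  github_my_gist as g,
  json_each(g.files) as f
group by
  json_extract(f.value, '$.language')
order by
  file_count desc;
```

Grouping by the full expression rather than the output alias sidesteps any ambiguity if the source table happens to have a column with the same name.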

As with Postgres extensions, you can load multiple SQLite extensions in order to join across APIs. You can join any of these API-sourced virtual tables with your own SQLite tables. And you can prepend create table NAME as to a query to persist the results as a table.
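A minimal sketch of persisting results in SQLite, assuming the GitHub extension is loaded and configured as shown earlier; the table name pyspark_repos is hypothetical. SQLite has no materialized views, so refreshing the snapshot means dropping and re-creating the table.

```sql
-- SQLite: snapshot the PySpark search into an ordinary local table
-- (table name is hypothetical). Drop first so the script can be re-run.
drop table if exists pyspark_repos;

create table pyspark_repos as
select name_with_owner, updated_at, stargazer_count
from github_search_repository
where query = 'pyspark in:name';

-- Later queries read the local copy instead of calling the GitHub API.
select name_with_owner, stargazer_count
from pyspark_repos
order by stargazer_count desc
limit 10;
```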

Using a Steampipe Plugin as a Standalone Export Tool

Visit Steampipe downloads to find the installer for your OS, and run it to acquire the export distribution of a plugin. Again, we’ll illustrate using the GitHub plugin.

    $ sudo /bin/sh -c "$(curl -fsSL
    Enter the plugin name: github
    Enter the version (latest):
    Enter location (/usr/local/bin):
    Created temporary directory at /tmp/tmp.48QsUo6CLF.
    Downloading steampipe_export_github.linux_amd64.tar.gz...
    ############################################################################## 100.0%
    Deflating downloaded archive
    steampipe_export_github
    Installing
    Applying necessary permissions
    Removing downloaded archive
    steampipe_export_github was installed successfully to
    /usr/local/bin

    $ steampipe_export_github -h
    Export data using the github plugin.

    Find detailed usage information including table names,
    column names, and examples at the Steampipe Hub:

    Usage:
      steampipe_export_github TABLE_NAME [flags]

    Flags:
          --config string       Config file data
      -h, --help                help for steampipe_export_github
          --limit int           Limit data
          --output string       Output format: csv, json or jsonl (default "csv")
          --select strings      Column data to display
          --where stringArray   where clause data

There’s no SQL engine in the picture here; this tool is purely an exporter. To export all your gists to a JSON file:

    steampipe_export_github github_my_gist --output json > gists.json

To select only a few columns and export to a CSV file:

    steampipe_export_github github_my_gist --output csv --select "description,created_at,html_url" > gists.csv

You can use --limit to limit the rows returned and --where to filter them, but mostly you’ll use this tool to quickly and easily grab data that you’ll massage elsewhere, for example, in a spreadsheet.

Tap into the Steampipe Plugin Ecosystem

Steampipe plug-ins aren’t just raw interfaces to underlying APIs. They use tables to model those APIs in useful ways. For example, the github_my_repository table exemplifies a design pattern that applies consistently across the suite of plug-ins. From the GitHub plugin’s documentation:

You can own repositories individually, or you can share ownership of repositories with other people in an organization. The github_my_repository table will list repos that you own, that you collaborate on, or that belong to your organizations. To query ANY repository, including public repos, use the github_repository table.

Other plug-ins follow the same pattern. For example, the Microsoft 365 plugin provides both microsoft_my_mail_message and microsoft_mail_message, and the Google Workspace plugin provides googleworkspace_my_gmail_message and googleworkspace_gmail. Where possible, plug-ins consolidate views of resources from the perspective of an authenticated user.
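For the GitHub pair quoted above, the difference might look like this. It is a sketch under stated assumptions: unqualified table names (as in the Steampipe CLI, or with search_path set), column names borrowed from the github_search_repository example earlier, and a github_repository table that needs a full_name qualifier because it can reach any repository on GitHub. Check the hub documentation for each table’s actual columns and required qualifiers.

```sql
-- Repos you own, collaborate on, or that belong to your organizations:
-- the authenticated user defines the scope, so no qualifier is needed.
select name_with_owner
from github_my_repository;

-- Any repository, including public ones you have no connection to;
-- assumed to require the repo's full name as a qualifier.
select name_with_owner, stargazer_count
from github_repository
where full_name = 'turbot/steampipe';
```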

While plug-ins typically provide tables with fixed schemas, that’s not always the case. Dynamic schemas, implemented by the Airtable, CSV, Kubernetes, and Salesforce plug-ins (among others), are another key pattern. Here’s a CSV example using a standalone Postgres FDW.
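The IMPORT statement below assumes the CSV plugin’s extension, server, and local schema already exist. By analogy with the GitHub steps, that setup might look like this; the names steampipe_postgres_csv and steampipe_csv assume the same steampipe_postgres_&lt;plugin&gt; naming convention the GitHub distribution uses.

```sql
-- Sketch: CSV plugin setup, mirroring the GitHub FDW steps.
-- Assumes the CSV plugin's Postgres FDW distribution is already installed.
CREATE EXTENSION IF NOT EXISTS steampipe_postgres_csv;

-- The config (which directories to scan) is passed at import time instead.
CREATE SERVER steampipe_csv FOREIGN DATA WRAPPER steampipe_postgres_csv;

-- Local schema that will hold one foreign table per .csv file.
CREATE SCHEMA IF NOT EXISTS csv;
```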

    IMPORT FOREIGN SCHEMA csv FROM SERVER steampipe_csv INTO csv
      OPTIONS (config 'paths=["/home/jon/csv"]');

Now all the .csv files in /home/jon/csv will automagically be Postgres foreign tables. Suppose you keep track of valid owners of EC2 instances in a file called ec2_owner_tags. Here’s a query against the corresponding table.

    select * from csv.ec2_owner_tags;

         owner      |            _ctx
    ----------------+----------------------------
     Pam Beesly     | {"connection_name": "csv"}
     Dwight Schrute | {"connection_name": "csv"}

You can join that table with the AWS plugin’s aws_ec2_instance table to report owner tags on EC2 instances that are (or are not) listed in the CSV file.

    select
      ec2.owner,
      case
        when csv.owner is null then 'false'
        else 'true'
      end as is_listed
    from
      (select distinct tags ->> 'owner' as owner
       from aws.aws_ec2_instance) ec2
    left join
      csv.ec2_owner_tags csv on ec2.owner = csv.owner;

         owner      | is_listed
    ----------------+-----------
     Dwight Schrute | true
     Michael Scott  | false

Across the suite of plug-ins there are more than 2,300 predefined fixed-schema tables that you can use in these ways, plus an unlimited number of dynamic tables. And new plug-ins are constantly being added by Turbot and by Steampipe’s open source community. You can tap into this ecosystem using Steampipe or Turbot Pipes, from your own Postgres or SQLite database, or directly from the command line.